Lecture 17: Deep generative models (part 1)

Overview of the theoretical basis and connections of deep generative models.

In this lecture, we give an overview of the theoretical basis of several popular generative models and of the connections between them.

Theoretical Basis of deep generative models

Deep Generative Models

GANs and VAEs are widely regarded as among the most exciting developments in machine learning, or at least in deep learning, over the past decade, and today you will see why. Mathematically, however, they are not very complicated.

There are deep connections between almost every piece of deep learning and a counterpart machine learning method invented decades ago. For example, a one-layer RBM can be unrolled into an infinitely deep directed network with tied weights, and an infinitely wide neural network with i.i.d. random weights converges to a Gaussian process.

Deep generative models

- Define probability distributions over a set of variables;

- Deep means multiple layers of hidden variables (so these are graphical models).

Early forms of deep generative models

To ground the discussion in a few concrete examples, we introduce some early forms of deep generative models.

- Hierarchical Bayesian models

  - Sigmoid belief nets

We have already learned the sigmoid belief network, in which each lower layer is conditioned on the layer above it through a conditional distribution defined by a sigmoid function: each unit in the lower layer follows a Bernoulli distribution whose parameter is the sigmoid of a weighted sum of its parents,

p(x_i = 1 \mid \boldsymbol{h}) = \sigma\left(\sum_j w_{ij} h_j + b_i\right).
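Because every conditional is a Bernoulli with a sigmoid mean, a sigmoid belief net is easy to sample from top-down (ancestral sampling). The sketch below is illustrative only: the layer sizes, the random weights, and the helper name `sample_sbn` are hypothetical, and the top layer is assumed to have independent Bernoulli units.

```python
import numpy as np

def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))

def sample_sbn(top_probs, weights, biases, n_samples, rng):
    """Hypothetical helper: ancestral (top-down) sampling from a layered sigmoid belief net.

    top_probs  : Bernoulli means of the (independent) top-layer units.
    weights[l] : array of shape (units in layer l, units in layer l+1);
                 entry [j, i] connects upper unit j to lower unit i.
    biases[l]  : biases of the units in layer l+1.
    Returns the sampled binary layers, top layer first.
    """
    h = (rng.random((n_samples, top_probs.size)) < top_probs).astype(float)
    layers = [h]
    for W, b in zip(weights, biases):
        p = sigmoid(h @ W + b)                        # p(x_i = 1 | h) for every lower unit
        h = (rng.random(p.shape) < p).astype(float)   # independent Bernoulli draws
        layers.append(h)
    return layers

rng = np.random.default_rng(0)
sizes = [3, 5, 8]                                     # top, hidden, visible (hypothetical)
weights = [rng.standard_normal((m, n)) for m, n in zip(sizes[:-1], sizes[1:])]
biases = [np.zeros(n) for n in sizes[1:]]
layers = sample_sbn(np.full(3, 0.5), weights, biases, n_samples=10, rng=rng)
print([layer.shape for layer in layers])             # [(10, 3), (10, 5), (10, 8)]
```

Sampling is cheap; the hard part, as discussed below, is inferring the hidden layers given the visible ones.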

The deep belief network (DBN) is one of the first deep learning models in the current sense: train RBMs block-wise, stack them on top of each other, and then fine-tune the whole stack with further heuristics (in Hinton et al., 2006, a contrastive version of the wake-sleep algorithm).

Graphical models have mathematical properties that tell you how they behave, and a deep learning model or technique is often a heuristic extension of such a model. (The word "model" itself is sometimes not quite appropriate, because what is called a model is sometimes only a technique built on top of a principled model.)

- Neural network models

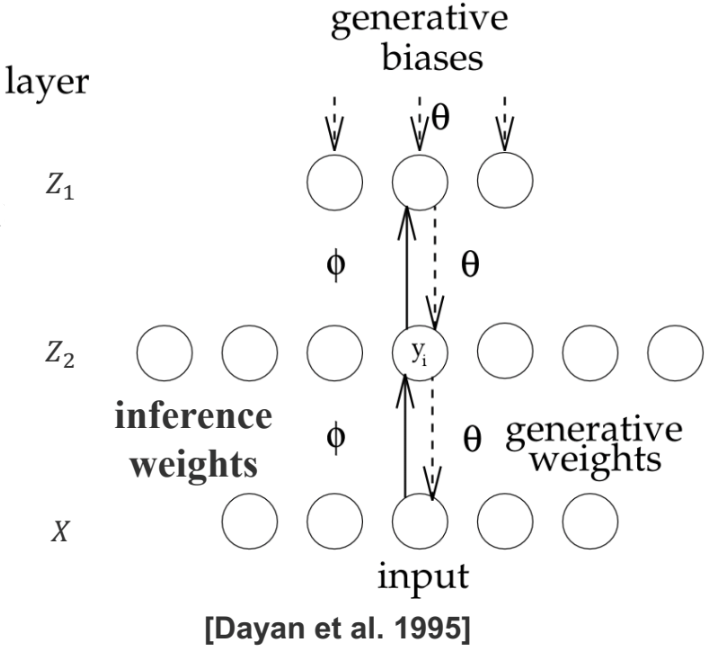

  - Helmholtz machines: alternative inference/learning methods

The generative model is just a multi-layer directed network (like a sigmoid belief net); what is interesting is the particular way it is learned. Inference in large graphical models usually involves a two-step process. For example, in the EM algorithm we infer the hidden variables (E-step) and then re-estimate the parameters (M-step). In variational inference, we introduce a variational approximation to the posterior distribution, use it to impute the hidden variables, and still perform an M-step.

The Helmholtz machine uses a special variational approximation: a separate model, called the inference model $q$. You learn the generative model $p$ and the inference model $q$ separately, and use $q$ to impute the hidden variables. So it is an alternative inference or learning method, on a par with MCMC and similar techniques, not a different model.


  - Predictability minimization: alternative loss functions

Ideally, hidden variables should be independent of each other; one way to quantify their dependence is to use each variable to predict the others.

There is a competition between two forces: on one hand, predictors are learned to predict each hidden variable from the remaining ones; on the other hand, the hidden variables take values that make them as hard to predict as possible.

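Schmidhuber has argued that the idea behind GANs is no different from predictability minimization (PM), since both pit a predictor against the process that produces what it predicts: in a GAN, the predictor (discriminator) tries to tell truthfully whether a sample comes from the real or the fake source.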
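The dependence measure behind PM can be illustrated with linear predictors. This is a simplified sketch, not Schmidhuber's training procedure: the helper `predictability_errors` is hypothetical, it uses least-squares linear predictors (which only detect linear dependence), and it only evaluates the predictors' side of the game; the encoder that would be trained to maximize these errors is omitted.

```python
import numpy as np

def predictability_errors(h):
    """Hypothetical helper: for each code unit i, fit a linear least-squares predictor
    of h[:, i] from the remaining units (plus a bias) and return its mean squared error.
    In PM, predictors minimize these errors while the encoder producing h maximizes them."""
    n, k = h.shape
    errors = np.empty(k)
    for i in range(k):
        X = np.hstack([np.delete(h, i, axis=1), np.ones((n, 1))])
        coef, *_ = np.linalg.lstsq(X, h[:, i], rcond=None)
        errors[i] = np.mean((h[:, i] - X @ coef) ** 2)
    return errors

rng = np.random.default_rng(0)
n = 5000
independent = rng.standard_normal((n, 3))
correlated = independent.copy()
correlated[:, 2] = independent[:, 0] + 0.1 * rng.standard_normal(n)   # unit 2 nearly copies unit 0

err_ind = predictability_errors(independent)   # each close to 1: units are unpredictable
err_corr = predictability_errors(correlated)   # units 0 and 2 become almost perfectly predictable
print(err_ind, err_corr)
```

Low prediction error signals dependent units, which is exactly what the encoder in PM is pushed to avoid.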

The word "model" is not used rigorously here: sometimes the model is the same, but depending on which technique you use, you get very different things.

- Training of DGMs via an EM-style framework

  - Sampling / data augmentation

\begin{aligned} &\boldsymbol{z} = (\boldsymbol{z}_1, \boldsymbol{z}_2), \\ &\boldsymbol{z}_1^{new} \sim p(\boldsymbol{z}_1|\boldsymbol{z}_2, \boldsymbol{x}), \\ &\boldsymbol{z}_2^{new} \sim p(\boldsymbol{z}_2|\boldsymbol{z}_1^{new}, \boldsymbol{x}). \end{aligned}

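  - Variational inference: approximate the posterior with a variational distribution $q_{\phi}(z|x)$ and maximize the variational lower bound

\log p(x) \geq \mathbb{E}_{q_{\phi}(z|x)}\left[\log p_{\theta}(x, z)\right] - \mathbb{E}_{q_{\phi}(z|x)}\left[\log q_{\phi}(z|x)\right] := \mathcal{L}(\theta, \phi; x),

and the objective is

\max_{\theta, \phi}\mathcal{L}(\theta, \phi; x).

  - Wake sleep: variational inference performs one relaxation of the true loss; wake sleep performs one more. It alternately minimizes two different losses, and the procedure usually settles somewhere, but there is no single objective whose optimum it is known to reach. It is a procedure rather than an algorithm for a well-defined objective.

\begin{aligned} &\text{Wake Phase}: \max_{\theta}\mathbb{E}_{q_{\phi}(z|x)}\left[ \log p_{\theta}(x|z)\right];\\ &\text{Sleep Phase}: \max_{\phi}\mathbb{E}_{p_{\theta}(z, x)}\left[ \log q_{\phi}(z|x)\right]. \end{aligned}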
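The sampling / data-augmentation step listed above alternates between two blocks of hidden variables, each drawn from its conditional given the other and the data. A minimal sketch, assuming (hypothetically) that the posterior $p(z_1, z_2 \mid x)$ for a fixed $x$ is a standard bivariate Gaussian with correlation `rho`, so both conditionals are Gaussian in closed form; `block_gibbs` is an illustrative name.

```python
import numpy as np

def block_gibbs(rho, n_iters, rng, z2_init=0.0):
    """Hypothetical helper: alternately sample z1 ~ p(z1 | z2, x) and z2 ~ p(z2 | z1_new, x),
    where p(z1, z2 | x) is assumed to be a standard bivariate Gaussian with correlation rho.
    Each conditional is then N(rho * other, 1 - rho^2)."""
    cond_sd = np.sqrt(1.0 - rho ** 2)
    z2 = z2_init
    samples = np.empty((n_iters, 2))
    for t in range(n_iters):
        z1 = rng.normal(rho * z2, cond_sd)   # z1_new ~ p(z1 | z2, x)
        z2 = rng.normal(rho * z1, cond_sd)   # z2_new ~ p(z2 | z1_new, x)
        samples[t] = (z1, z2)
    return samples

rng = np.random.default_rng(0)
samples = block_gibbs(rho=0.8, n_iters=20000, rng=rng)
kept = samples[1000:]                        # discard burn-in
print(np.corrcoef(kept.T)[0, 1])             # close to 0.8
```

Each update only needs the conditional of one block, which is why this scheme is attractive when the joint posterior is intractable but the block conditionals are not.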

Resurgence of deep generative models

- Restricted Boltzmann machines (RBMs)

- Building blocks of deep probabilistic models

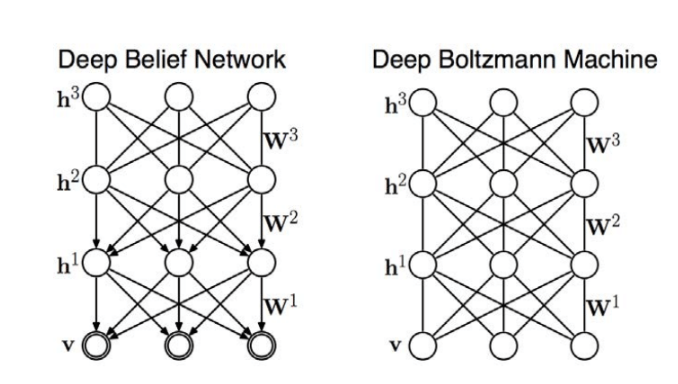

- Deep belief networks (DBNs)

- Hybrid graphical model

- Inference in DBNs is problematic due to explaining away

- Deep Boltzmann Machines (DBMs)

  - Undirected model

- Variational autoencoders (VAEs)

- Neural Variational Inference and Learning (NVIL)

In fact, what a VAE does is very similar to a Helmholtz machine: both have a generative model and an inference model. The Helmholtz machine learns the inference model with the wake-sleep algorithm, while the VAE introduces another technique to simplify the procedure so that you don't need the second relaxation. It still maximizes the same lower bound on the data likelihood, but the form of the inference model is constrained so that the bound is easier to optimize. So it is not a new model but an inference algorithm.

- Generative adversarial networks (GANs)

Instead of having explicit hidden variables and conditional distributions, a GAN defines its samples implicitly through a learned transformation of noise. The two parts of a GAN are:

  - A generator: stochastic noise $z$ goes through a deterministic transformation $G$.

  - A discriminator $D$ that judges the resulting samples, trying to distinguish the fake samples from true data.

- Generative moment matching networks (GMMNs)

- Autoregressive neural networks

Synonyms in the literature

Since people in the research community, intentionally or unconsciously, keep coming up with new names for existing ideas, we clarify some terms here to help you link current knowledge to the historical literature where these techniques originated.

Inference model

The inference model refers to the model $q_{\phi}(z|x)$ learned to approximate the posterior distribution $p_{\theta}(z|x)$. Synonyms of the inference model include

- Variational approximation

- Recognition model

- Inference network (if parameterized as neural networks)

- Recognition network (if parameterized as neural networks)

- (Probabilistic) encoder

Generative model

The generative model usually consists of a prior plus a conditional, $p(z)p_{\theta}(x|z)$ (or directly the joint $p_{\theta}(x, z)$), and is naturally understood as a likelihood model. Synonyms of the generative model include

- The (data) likelihood model

- Generative network (if parameterized as neural networks)

- Generator

- (Probabilistic) decoder

Note that the encoder and decoder form a pair, corresponding to the mappings “visible to latent” and “latent to visible”, respectively.

Recap of Variational Inference

Variational Lower Bound

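Variational inference approximates the posterior $p_{\theta}(z|x)$ with a variational distribution $q_{\phi}(z|x)$ by maximizing the variational lower bound

\log p(x) \geq \mathbb{E}_{q_{\phi}(z|x)}\left[\log p_{\theta}(x, z)\right] - \mathbb{E}_{q_{\phi}(z|x)}\left[\log q_{\phi}(z|x)\right] := \mathcal{L}(\theta, \phi; x),

which is equivalent to minimizing the free energy

F(\theta, \phi; x) = -\mathcal{L}(\theta, \phi; x) = -\log p(x) + \mathrm{KL}\left(q_{\phi}(z|x) \,\|\, p_{\theta}(z|x)\right).

Note that the KL divergence term in the free energy vanishes if your approximation equals the true posterior.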
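The identity between the free energy, the negative log-likelihood, and the KL term can be checked numerically on a tiny model where everything is computable by enumeration. This is a sketch with made-up numbers: a single observation $x$ and a discrete latent $z \in \{0, 1, 2\}$; the helper names are illustrative.

```python
import numpy as np

# Toy model: one observation x and a discrete latent z in {0, 1, 2}.
# log_joint[k] = log p(x, z = k); the numbers are made up for illustration.
log_joint = np.log(np.array([0.10, 0.25, 0.05]))

def elbo(q, log_joint):
    """L(q) = E_q[log p(x, z)] - E_q[log q(z)] for a discrete q with full support."""
    return np.sum(q * (log_joint - np.log(q)))

def kl(q, p):
    """KL(q || p) for discrete distributions with full support."""
    return np.sum(q * (np.log(q) - np.log(p)))

log_px = np.log(np.sum(np.exp(log_joint)))   # log p(x) = log sum_z p(x, z)
posterior = np.exp(log_joint - log_px)        # p(z | x)

q = np.array([0.5, 0.3, 0.2])                 # an arbitrary approximation q(z | x)
free_energy = -elbo(q, log_joint)

print(np.isclose(free_energy, -log_px + kl(q, posterior)))  # True: F = -log p(x) + KL
print(np.isclose(elbo(posterior, log_joint), log_px))       # True: KL vanishes at q = p(z|x)
```

The second check is also why an unconstrained E-step recovers the exact posterior: the bound is tight exactly when $q$ equals $p_{\theta}(z|x)$.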

Solve VI with EM

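We can maximize the variational lower bound by EM steps. Specifically,

E-step: maximize $\mathcal{L}$ w.r.t. $\phi$ with $\theta$ fixed:

\max_{\phi}\mathcal{L}(\theta, \phi; x)

Note that if closed form solutions exist, the optimum is

q_{\phi}^{*}(z|x) \propto \exp\left[\log p_{\theta}(x, z)\right]

M-step: maximize $\mathcal{L}$ w.r.t. $\theta$ with $\phi$ fixed:

\max_{\theta}\mathcal{L}(\theta, \phi; x)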

Variational inference for generative models is still numerically or algebraically difficult in many complex settings. The algorithms we are going to introduce, including Wake-Sleep, VAEs, and GANs, are all relaxations of or surrogates for this procedure.

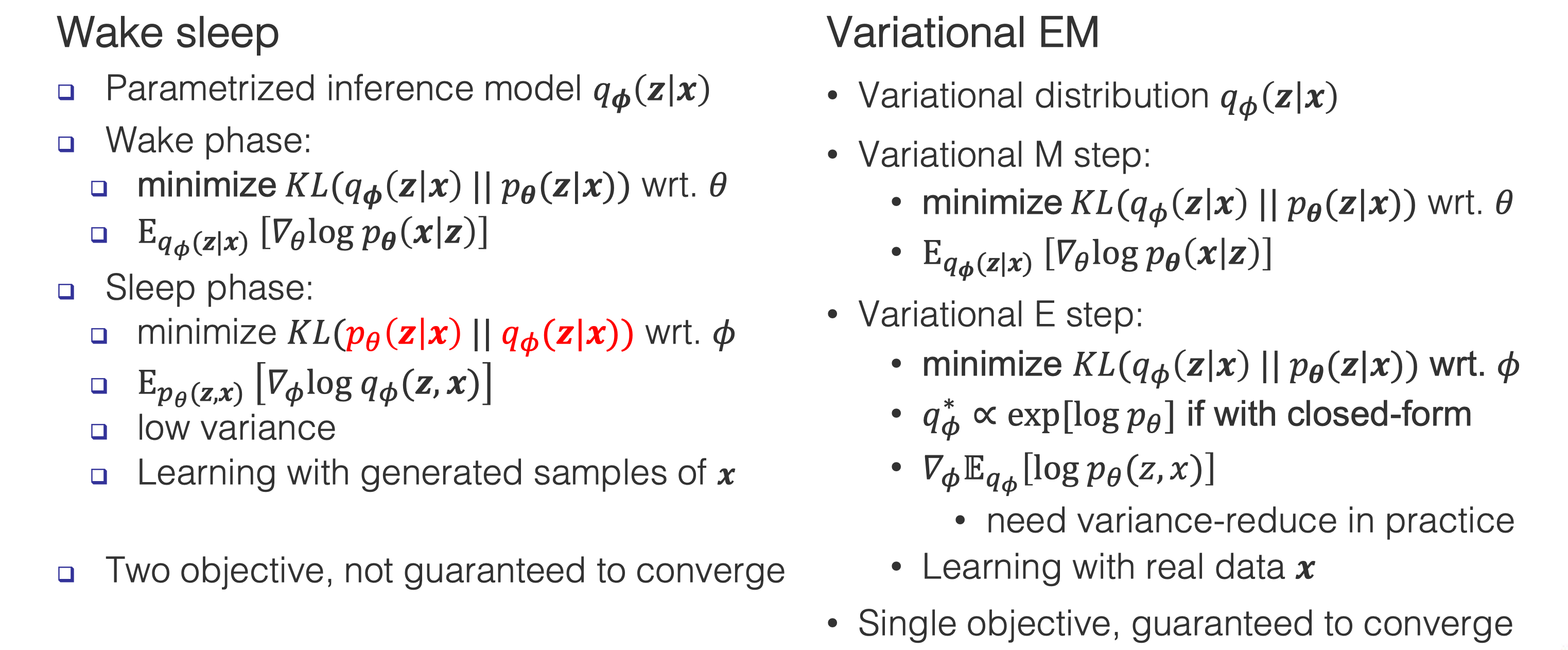

Wake Sleep Algorithm

While variational inference performs one relaxation of the true loss, the Wake-Sleep algorithm performs one more. Recall that the free energy is

F(\theta, \phi; x) = -\log p(x) + \mathrm{KL}\left(q_{\phi}(z|x) \,\|\, p_{\theta}(z|x)\right).

Wake Phase

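Wake Phase (corresponds to the variational M-step): minimize the free energy w.r.t. the generative parameter $\theta$, which, for a fixed prior $p(z)$, is

\max_{\theta}\mathbb{E}_{q_{\phi}(z|x)}\left[\log p_{\theta}(x|z)\right]

This amounts to maximum-likelihood estimation of the generative model on the data, with the hidden variables filled in by the inference model.

Typically, we get samples from $q_{\phi}(z|x)$ by running inference on real data $x$, and use them as targets for updating the generative model.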

Sleep Phase

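Sleep Phase (corresponds to the variational E-step): minimize the free energy w.r.t. the inference parameter $\phi$:

\min_{\phi} F(\theta, \phi; x)

While the Wake Phase simply involves an MLE-style estimation, the Sleep Phase runs into difficulties because the parameter $\phi$ appears in the distribution over which the expectation is taken. Whether we use a sampling technique or a specific deterministic approximation, the gradient of the expected log-likelihood term contains a log term:

\nabla_{\phi}\mathbb{E}_{q_{\phi}(z|x)}\left[\log p_{\theta}(x|z)\right] = \mathbb{E}_{q_{\phi}(z|x)}\left[\log p_{\theta}(x|z)\,\nabla_{\phi}\log q_{\phi}(z|x)\right],

and its Monte Carlo estimate has high variance.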

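To deal with this issue, Wake-Sleep uses a new trick that inverts the direction of the KL divergence. So the free energy becomes

F'(\theta, \phi; x) = -\log p(x) + \mathrm{KL}\left(p_{\theta}(z|x) \,\|\, q_{\phi}(z|x)\right),

and we can instead maximize

\max_{\phi}\mathbb{E}_{p_{\theta}(z, x)}\left[\log q_{\phi}(z|x)\right].

That is, we “dream” up samples $(z, x)$ from the generative model $p_{\theta}(z, x)$, i.e., $z \sim p(z)$ and then $x \sim p_{\theta}(x|z)$, and use them as targets to train the inference model.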
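To make the two phases concrete, here is a minimal sketch on a toy model chosen (as an assumption, not from the lecture) so that both phases are closed-form least-squares fits: generative model $p(z) = \mathcal{N}(0, 1)$, $p_{\theta}(x|z) = \mathcal{N}(wz, \sigma^2)$ with $\sigma^2$ fixed, and inference model $q_{\phi}(z|x) = \mathcal{N}(ax, s^2)$. The function name `wake_sleep` and all constants are hypothetical.

```python
import numpy as np

def wake_sleep(x_data, sigma2=1.0, n_iters=50, n_dream=20000, seed=0):
    """Hypothetical toy wake-sleep for a linear-Gaussian model:
    generative p(z) = N(0, 1), p_theta(x|z) = N(w z, sigma2), theta = w (sigma2 fixed);
    inference   q_phi(z|x) = N(a x, s2), phi = (a, s2).
    Each phase is an exact least-squares maximizer of its own objective."""
    rng = np.random.default_rng(seed)
    w, a, s2 = 0.0, 0.1, 1.0
    for _ in range(n_iters):
        # Wake phase: infer z ~ q_phi(z|x) on real data, then
        # max_w E_q[log p_theta(x|z)], i.e. regress x on z.
        z = a * x_data + np.sqrt(s2) * rng.standard_normal(x_data.shape)
        w = np.dot(z, x_data) / np.dot(z, z)
        # Sleep phase: "dream" (z, x) ~ p_theta(z, x), then
        # max_phi E_p[log q_phi(z|x)], i.e. regress z on x.
        z_d = rng.standard_normal(n_dream)
        x_d = w * z_d + np.sqrt(sigma2) * rng.standard_normal(n_dream)
        a = np.dot(x_d, z_d) / np.dot(x_d, x_d)
        s2 = np.mean((z_d - a * x_d) ** 2)
    return w, a, s2

rng = np.random.default_rng(1)
x_data = rng.normal(0.0, 2.0, size=20000)     # "real" data with variance about 4
w, a, s2 = wake_sleep(x_data)
print(w ** 2 + 1.0, np.var(x_data))           # model variance w^2 + sigma2 vs. data variance
```

In this linear-Gaussian case the inference family contains the exact posterior, so the sleep phase recovers it and the procedure behaves like EM; with a mismatched inference family, the two phases optimize different objectives and no such guarantee holds.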

VI vs. Wake-Sleep

Here is a comparison of variational inference and the Wake-Sleep algorithm:

Note that Wake-Sleep is not guaranteed to converge, since inverting the direction of the KL divergence has no theoretical guarantee: the two phases do not optimize a common objective. You can consider Wake-Sleep a heuristic algorithm.

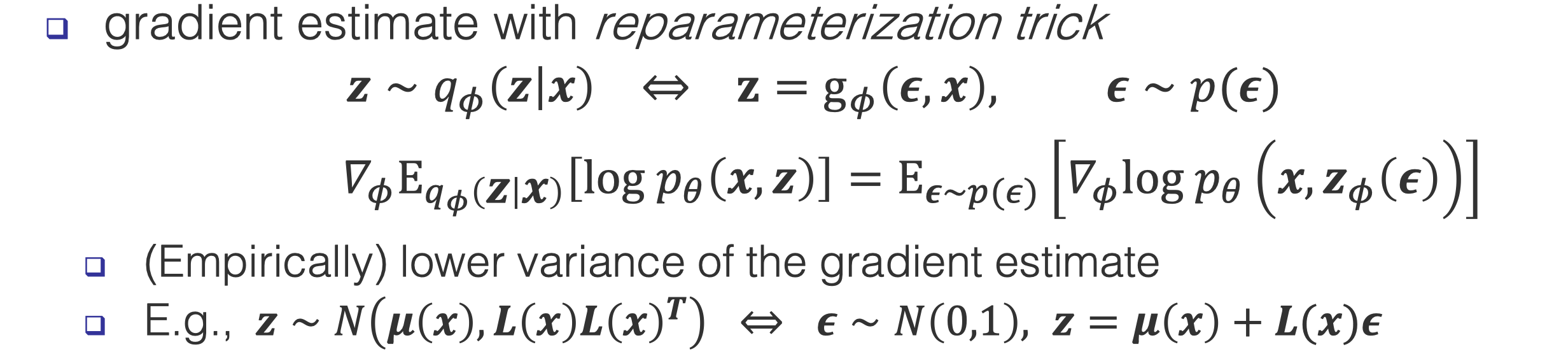

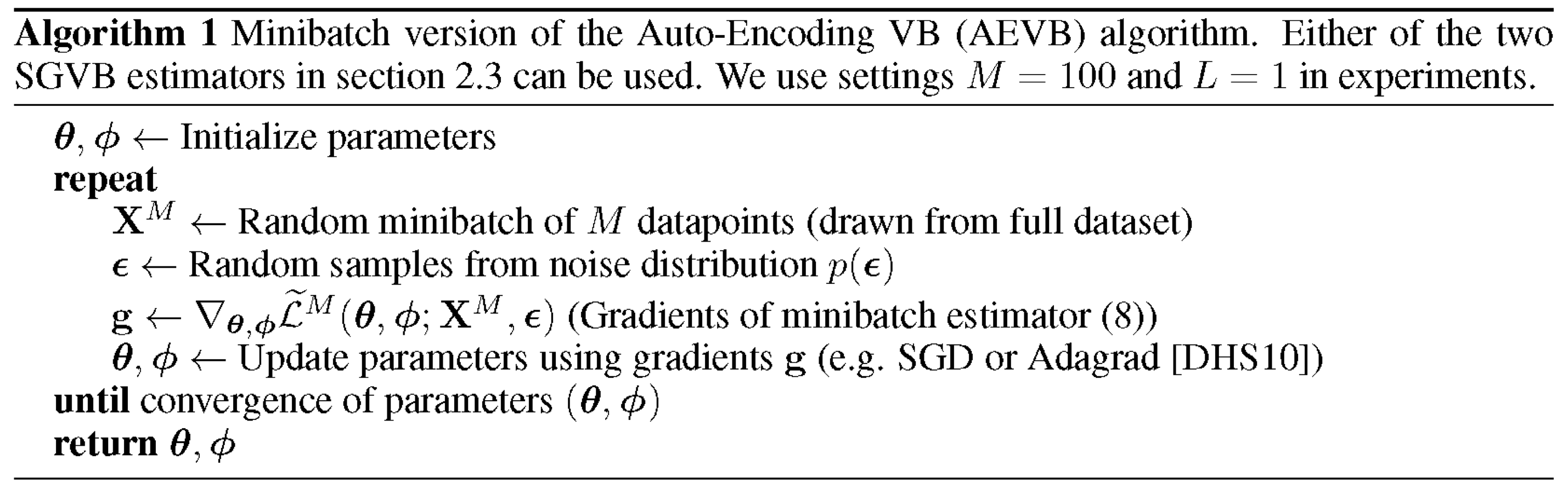

Variational autoencoders

VAEs use variational inference with an inference model. They are similar to Wake-Sleep but differ in how the inference model is estimated. As we said before, it is hard to estimate the inference model due to the high variance of the gradient. Here, instead of changing the loss function (as Wake-Sleep's new trick does), the authors of VAEs used the reparameterization trick to reduce the variance. Other alternatives for reducing variance include using control variates, as in reinforcement learning:

- Variational Bayesian inference with stochastic search (Paisley et al., 2012)

- Neural variational inference and learning in belief networks (Mnih and Gregor, 2014)

Reparameterization trick

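The reparameterization trick assumes that the latent variables result from a deterministic transformation of the input plus some independent noise, e.g., $z = \mu_{\phi}(x) + \sigma_{\phi}(x) \odot \epsilon$ with $\epsilon \sim \mathcal{N}(0, I)$ for a Gaussian $q_{\phi}(z|x)$. The deterministic transformation is a parameterization that you can design; because the noise does not depend on $\phi$, derivatives of everything can be computed by ordinary backpropagation through the samples.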
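Why this reduces variance can be seen on a one-dimensional example. The sketch below (a toy choice, not from the lecture) estimates $\frac{\partial}{\partial \mu}\mathbb{E}_{z \sim \mathcal{N}(\mu, \sigma^2)}[z^2] = 2\mu$ in two ways: through the reparameterization $z = \mu + \sigma\epsilon$, and with the score-function (log-derivative) estimator from the Sleep-Phase discussion. Both are unbiased; their variances differ sharply.

```python
import numpy as np

rng = np.random.default_rng(0)
mu, sigma, n = 1.5, 1.0, 100_000

# Target: d/dmu E_{z ~ N(mu, sigma^2)}[z^2], whose exact value is 2 * mu = 3.0.
eps = rng.standard_normal(n)
z = mu + sigma * eps                        # reparameterized samples, z = g(eps; mu, sigma)

# Reparameterization estimator: d/dmu f(g(eps)) = f'(z) * dz/dmu = 2 z * 1.
grad_reparam = 2.0 * z
# Score-function (log-derivative) estimator: f(z) * d/dmu log N(z; mu, sigma^2).
grad_score = z ** 2 * (z - mu) / sigma ** 2

print(grad_reparam.mean(), grad_score.mean())  # both close to 3.0
print(grad_reparam.var(), grad_score.var())    # about 4 vs. about 52
```

The reparameterized estimator uses the derivative of the integrand, while the score-function estimator only uses its value, which is the source of its much larger variance here.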

Algorithms

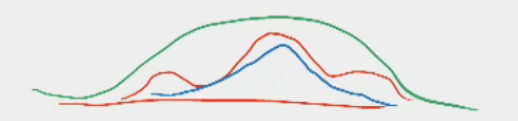
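As a minimal sketch of the quantity a VAE optimizes, the code below computes a single-sample reparameterized estimate of the lower bound $\mathbb{E}_{q_{\phi}(z|x)}[\log p_{\theta}(x|z)] - \mathrm{KL}(q_{\phi}(z|x) \,\|\, p(z))$ for binary data. Everything concrete here is an assumption for illustration: single linear layers for the encoder and decoder, a diagonal-Gaussian $q_{\phi}(z|x)$, a standard-normal prior, a Bernoulli decoder, and the helper names. In practice the gradients of this estimate would come from an autodiff library.

```python
import numpy as np

def gaussian_kl_to_standard_normal(mu, log_var):
    """Closed-form KL(N(mu, diag(exp(log_var))) || N(0, I)), summed over latent dims."""
    return 0.5 * np.sum(mu ** 2 + np.exp(log_var) - 1.0 - log_var, axis=-1)

def elbo_estimate(x, enc_W, enc_b, dec_W, dec_b, rng):
    """Hypothetical toy VAE: single-sample reparameterized ELBO per data point.
    Encoder q_phi(z|x) = N(mu, diag(sigma^2)) with [mu, log_var] = x @ enc_W + enc_b;
    decoder p_theta(x|z) = Bernoulli(sigmoid(z @ dec_W + dec_b))."""
    h = x @ enc_W + enc_b
    d = h.shape[-1] // 2
    mu, log_var = h[..., :d], h[..., d:]
    eps = rng.standard_normal(mu.shape)
    z = mu + np.exp(0.5 * log_var) * eps           # reparameterization trick
    logits = z @ dec_W + dec_b
    # Bernoulli log-likelihood in a numerically stable form: x * l - log(1 + exp(l)).
    log_lik = np.sum(x * logits - np.logaddexp(0.0, logits), axis=-1)
    return log_lik - gaussian_kl_to_standard_normal(mu, log_var)

rng = np.random.default_rng(0)
x = (rng.random((4, 6)) < 0.5).astype(float)        # 4 binary data points of dimension 6
enc_W, enc_b = 0.1 * rng.standard_normal((6, 4)), np.zeros(4)   # latent dimension 2
dec_W, dec_b = 0.1 * rng.standard_normal((2, 6)), np.zeros(6)
elbo = elbo_estimate(x, enc_W, enc_b, dec_W, dec_b, rng)
print(elbo)                                         # one (non-positive) estimate per data point
```

For binary data the estimate is never positive, since the Bernoulli log-likelihood is at most 0 and the KL term is non-negative.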

Applications: Blurred Generations

- Computer vision: images generated by VAEs tend to be blurry due to the mode-covering behavior of the objective, which is undesirable, for example when generating celebrity faces.

- Natural language processing: blurry generation can be favorable in NLP, since such blurred interpolations may produce some interesting outputs. Ambiguity is appreciated in language but not in images.
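"Mode covering" can be illustrated by fitting a single Gaussian to a two-mode target under the two directions of the KL divergence. Maximum likelihood minimizes the forward KL, $\mathrm{KL}(p \,\|\, q)$, which spreads $q$ over both modes (the behavior attributed to VAEs above); the reverse KL, $\mathrm{KL}(q \,\|\, p)$, is mode seeking and locks onto one mode. This is a toy sketch: the target mixture, the grid search, and `fit_single_gaussian` are illustrative assumptions, not part of any VAE or GAN algorithm.

```python
import numpy as np

x = np.linspace(-10.0, 10.0, 2001)
dx = x[1] - x[0]

def log_normal(x, mu, sigma):
    return -0.5 * ((x - mu) / sigma) ** 2 - np.log(sigma) - 0.5 * np.log(2.0 * np.pi)

# Target p: an equal mixture of two well-separated Gaussians (modes at -3 and +3).
log_p = np.logaddexp(log_normal(x, -3.0, 1.0), log_normal(x, 3.0, 1.0)) + np.log(0.5)
p = np.exp(log_p)

def fit_single_gaussian(direction):
    """Hypothetical helper: grid-search q = N(mu, sigma^2) minimizing either the forward
    KL(p || q) (mode covering) or the reverse KL(q || p) (mode seeking), by quadrature."""
    best = (np.inf, None, None)
    for mu in np.linspace(-5.0, 5.0, 101):
        for sigma in np.linspace(0.5, 5.0, 46):
            log_q = log_normal(x, mu, sigma)
            if direction == "forward":
                div = np.sum(p * (log_p - log_q)) * dx
            else:
                div = np.sum(np.exp(log_q) * (log_q - log_p)) * dx
            if div < best[0]:
                best = (div, mu, sigma)
    return best[1], best[2]

mu_fwd, sigma_fwd = fit_single_gaussian("forward")   # mean near 0, wide: covers both modes
mu_rev, sigma_rev = fit_single_gaussian("reverse")   # mean near -3 or +3, width near 1
print(mu_fwd, sigma_fwd, mu_rev, sigma_rev)
```

The forward-KL fit puts much of its mass between the modes, where the target has almost none; in image space this is the kind of averaging that shows up as blur.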

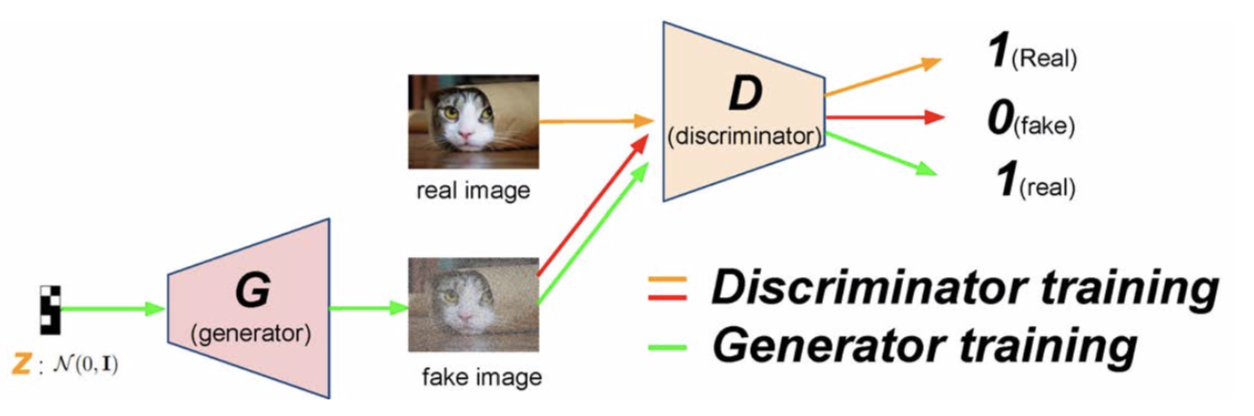

Generative adversarial networks

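Generative adversarial networks (GANs) are composed of two models: the generative model (generator) and the discriminative model (discriminator). The generative model $G$ maps noise $z \sim p(z)$ to samples $G(z)$, while the discriminative model $D(x)$ outputs the probability that $x$ came from the training data rather than from the generator.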

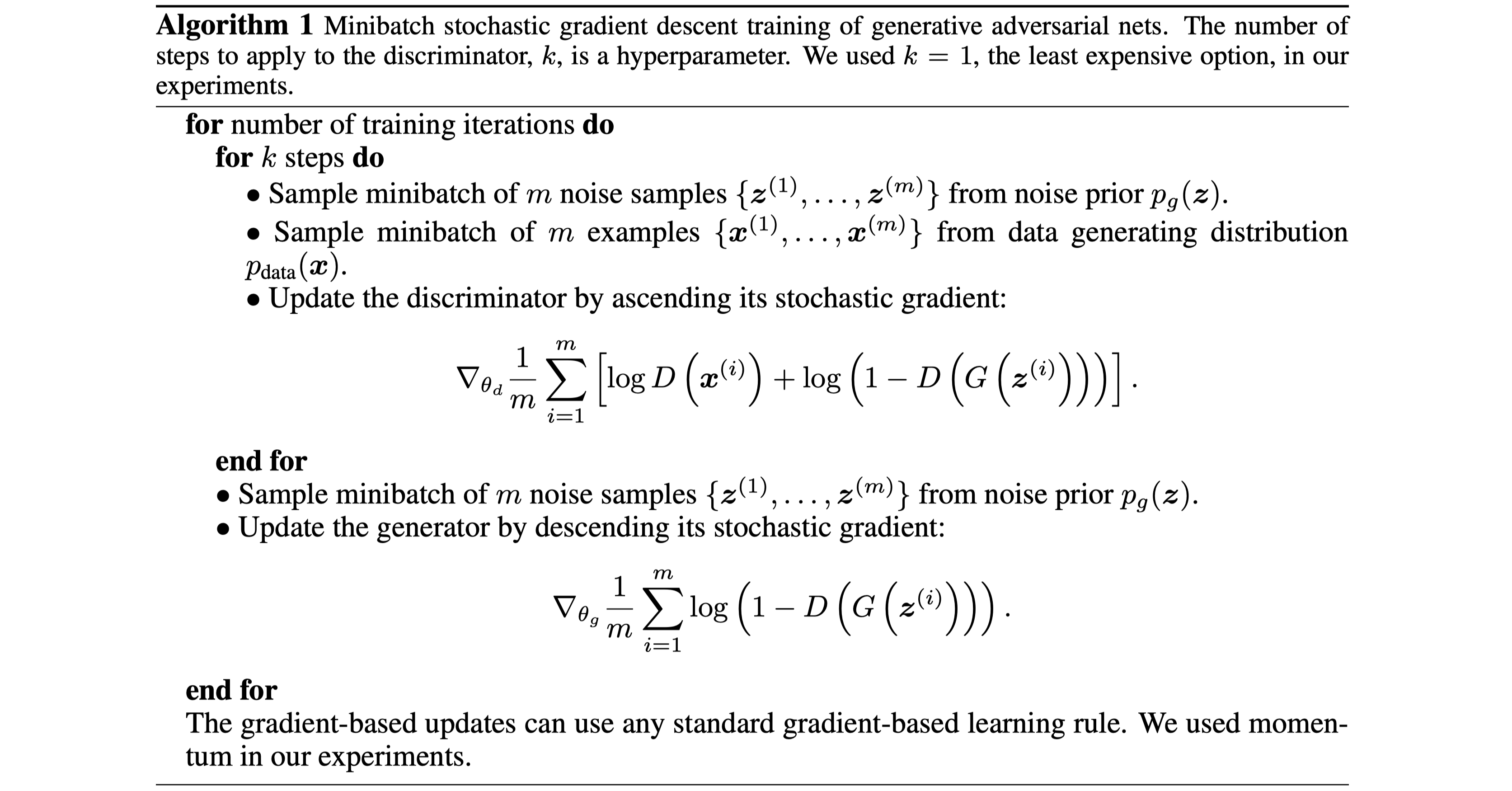

Learning GANs

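The discriminator is trained to maximize the (log) probability of assigning correct labels to both samples from the training dataset and samples generated by the generator. We use a cross-entropy-like objective function, which can be written as:

\max_{D}\mathbb{E}_{x \sim p_{data}(x)}\left[\log D(x)\right] + \mathbb{E}_{z \sim p(z)}\left[\log\left(1 - D(G(z))\right)\right]

The generator is trained to fool the discriminator, i.e., to minimize

\min_{G}\mathbb{E}_{z \sim p(z)}\left[\log\left(1 - D(G(z))\right)\right]

In the early training phase, where $D(G(z))$ is close to 0 because the discriminator easily rejects the poor samples, $\log(1 - D(G(z)))$ saturates and gives weak gradients; in practice the generator is therefore trained to maximize $\log D(G(z))$ instead.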

In short, learning GANs can be thought as a minimax game between the discriminator and the generator.
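Two standard facts about this minimax game can be checked numerically: for a fixed generator, the optimal discriminator is $D^*(x) = p_{data}(x) / (p_{data}(x) + p_g(x))$, at which the value equals $-\log 4 + 2\,\mathrm{JSD}(p_{data} \,\|\, p_g)$; and the generator loss $\log(1 - D(G(z)))$ saturates when the discriminator confidently rejects fakes. The sketch below uses made-up distributions on four points and writes $D(G(z)) = \sigma(a)$ with a discriminator logit $a$; the helper names are illustrative.

```python
import numpy as np

def sigmoid(a):
    return 1.0 / (1.0 + np.exp(-a))

def kl(p, q):
    """KL(p || q) for discrete distributions, summing only where p > 0."""
    mask = p > 0
    return np.sum(p[mask] * np.log(p[mask] / q[mask]))

def gan_value(d, p_data, p_g):
    """V(D, G) = E_{p_data}[log D(x)] + E_{p_g}[log(1 - D(x))] on a finite space."""
    with np.errstate(divide="ignore", invalid="ignore"):
        real = np.where(p_data > 0, p_data * np.log(d), 0.0)
        fake = np.where(p_g > 0, p_g * np.log(1.0 - d), 0.0)
    return real.sum() + fake.sum()

# Toy distributions over 4 points (made-up numbers for illustration).
p_data = np.array([0.5, 0.3, 0.2, 0.0])
p_g = np.array([0.1, 0.2, 0.3, 0.4])

# 1) Optimal discriminator and the Jensen-Shannon identity.
d_star = p_data / (p_data + p_g)
m = 0.5 * (p_data + p_g)
jsd = 0.5 * kl(p_data, m) + 0.5 * kl(p_g, m)
print(np.isclose(gan_value(d_star, p_data, p_g), -np.log(4.0) + 2.0 * jsd))  # True

# 2) Saturation. Gradients of the generator losses w.r.t. the logit a
#    (the chain rule to the generator parameters multiplies both by da/dtheta):
#      minimax loss          log(1 - D):  d/da = -sigmoid(a)
#      non-saturating loss   -log D:      d/da = -(1 - sigmoid(a))
a = -6.0                      # early training: D confidently rejects the fake sample
grad_minimax = -sigmoid(a)
grad_nonsat = -(1.0 - sigmoid(a))
print(abs(grad_minimax), abs(grad_nonsat))  # about 0.0025 vs. about 0.9975
```

The second part is why the non-saturating objective $\max \log D(G(z))$ is preferred early in training: it gives the generator a useful gradient exactly when its samples are poor.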

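Note that the generator defines an implicit distribution $p_g(x)$ over $x$: we can draw samples by computing $x = G(z)$ with $z \sim p(z)$, but its density cannot be evaluated explicitly.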

An example of optimization of GANs using stochastic gradient descent from

A unified view of deep generative models

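For a unified view of deep generative models, we will reformulate GANs in the ‘variational-EM’ format. Let’s first introduce an indicator $y$ of whether a sample is real ($y = 1$) or generated ($y = 0$), and define the conditional distribution of $x$ given $y$:

p_{\theta}(x|y) = \begin{cases} p_{g_{\theta}}(x) & y = 0, \\ p_{data}(x) & y = 1, \end{cases}

where $p_{g_{\theta}}(x)$ is the implicit distribution of $x = G_{\theta}(z)$, $z \sim p(z)$, and $p_{data}(x)$ is the real data distribution.

Define the discriminator distribution

q_{\phi}(y|x) = D_{\phi}(x)^{y}\left(1 - D_{\phi}(x)\right)^{1-y},

i.e., $q_{\phi}(y = 1|x) = D_{\phi}(x)$ is the probability that $x$ is real.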

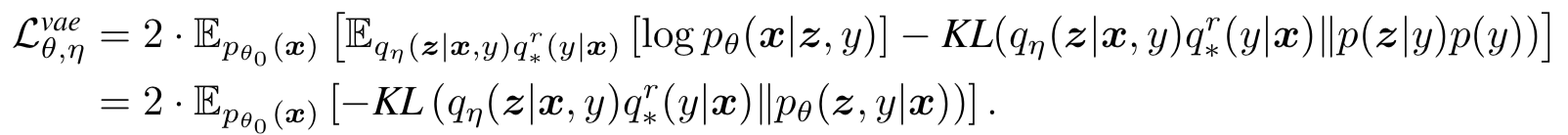

GAN vs. Variational EM

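Recall that in variational EM, we optimize one single objective

\max_{\theta, \phi}\mathcal{L}(\theta, \phi; x)

w.r.t. both the inference parameter $\phi$ (E-step) and the generative parameter $\theta$ (M-step).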

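Now consider the above new formulation for GANs. The objectives can be written as

\begin{aligned} &\max_{\phi}\mathcal{L}_{\phi} = \mathbb{E}_{p_{\theta}(x|y)p(y)}\left[\log q_{\phi}(y|x)\right], \\ &\max_{\theta}\mathcal{L}_{\theta} = \mathbb{E}_{p_{\theta}(x|y)p(y)}\left[\log q_{\phi}^{r}(y|x)\right], \end{aligned}

where $q_{\phi}^{r}(y|x) = q_{\phi}(1 - y|x)$ is the discriminator distribution with the labels reversed.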

Following the similar terms in variational EM, we could interpret the

In variational EM we minimize

let

we would have the equivalent update rule for

Lemma 1:

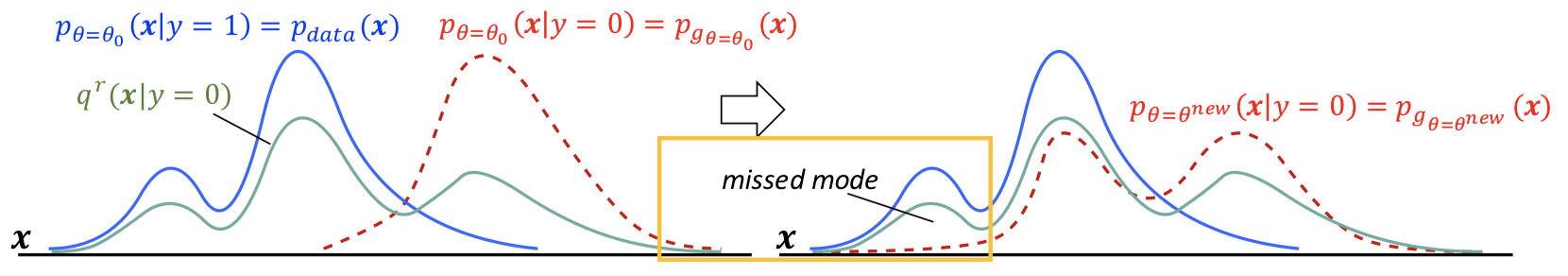

Here the generative model

and thus we could break the KLD into two terms:

where

could be seen as a mixture of

This KLD formulation allows GANs to recover the major modes of the data distribution $p_{data}(x)$, but also leads GANs to miss some of its minor modes.

GAN vs. VAE

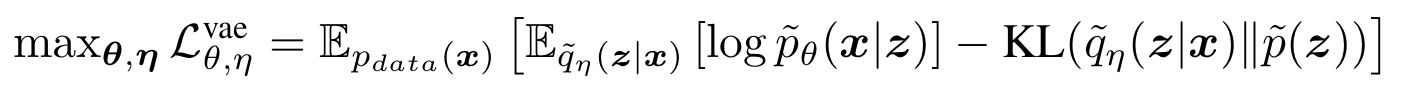

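Recap the VAE objective:

\max_{\theta, \phi}\mathcal{L}(\theta, \phi; x) = \mathbb{E}_{q_{\phi}(z|x)}\left[\log p_{\theta}(x|z)\right] - \mathrm{KL}\left(q_{\phi}(z|x) \,\|\, p(z)\right)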

Similar to GAN,

we accordingly assume a perfect discriminator

where the posterior

is basically determined by the generative model

The table below summarizes the comparison.

VAE/GAN vs. Wake-Sleep

Look back at the wake-sleep algorithm:

VAE only deals with the wake phase, and extends it by also learning the inference parameter

GAN only deals with the sleep phase, and extends it by also learning the generative parameter

References

- Connectionist learning of belief networks. Neal, R.M., 1992. Artificial Intelligence, Vol 56(1), pp. 71--113. Elsevier.

- The Helmholtz machine. Dayan, P., Hinton, G.E., Neal, R.M. and Zemel, R.S., 1995. Neural Computation, Vol 7(5), pp. 889--904. MIT Press.

- Semilinear predictability minimization produces well-known feature detectors. Schmidhuber, J., Eldracher, M. and Foltin, B., 1996. Neural Computation, Vol 8(4), pp. 773--786. MIT Press.

- Information processing in dynamical systems: Foundations of harmony theory. Smolensky, P., 1986.

- A fast learning algorithm for deep belief nets. Hinton, G.E., Osindero, S. and Teh, Y., 2006. Neural Computation, Vol 18(7), pp. 1527--1554. MIT Press.

- Deep Boltzmann machines. Salakhutdinov, R. and Hinton, G., 2009. Artificial Intelligence and Statistics, pp. 448--455.

- Auto-encoding variational Bayes. Kingma, D.P. and Welling, M., 2013. arXiv preprint arXiv:1312.6114.

- Neural variational inference and learning in belief networks. Mnih, A. and Gregor, K., 2014. arXiv preprint arXiv:1402.0030.

- Generative adversarial nets. Goodfellow, I., Pouget-Abadie, J., Mirza, M., Xu, B., Warde-Farley, D., Ozair, S., Courville, A. and Bengio, Y., 2014. Advances in Neural Information Processing Systems, pp. 2672--2680.

- Generative moment matching networks. Li, Y., Swersky, K. and Zemel, R., 2015. International Conference on Machine Learning, pp. 1718--1727.

- Training generative neural networks via maximum mean discrepancy optimization. Dziugaite, G.K., Roy, D.M. and Ghahramani, Z., 2015. arXiv preprint arXiv:1505.03906.

- Variational Bayesian inference with stochastic search. Paisley, J., Blei, D. and Jordan, M., 2012. arXiv preprint arXiv:1206.6430.

- Unsupervised representation learning with deep convolutional generative adversarial networks. Radford, A., Metz, L. and Chintala, S., 2015. arXiv preprint arXiv:1511.06434.

- Generating sentences from a continuous space. Bowman, S.R., Vilnis, L., Vinyals, O., Dai, A.M., Jozefowicz, R. and Bengio, S., 2015. arXiv preprint arXiv:1511.06349.